The Failure of the Static Paradigm

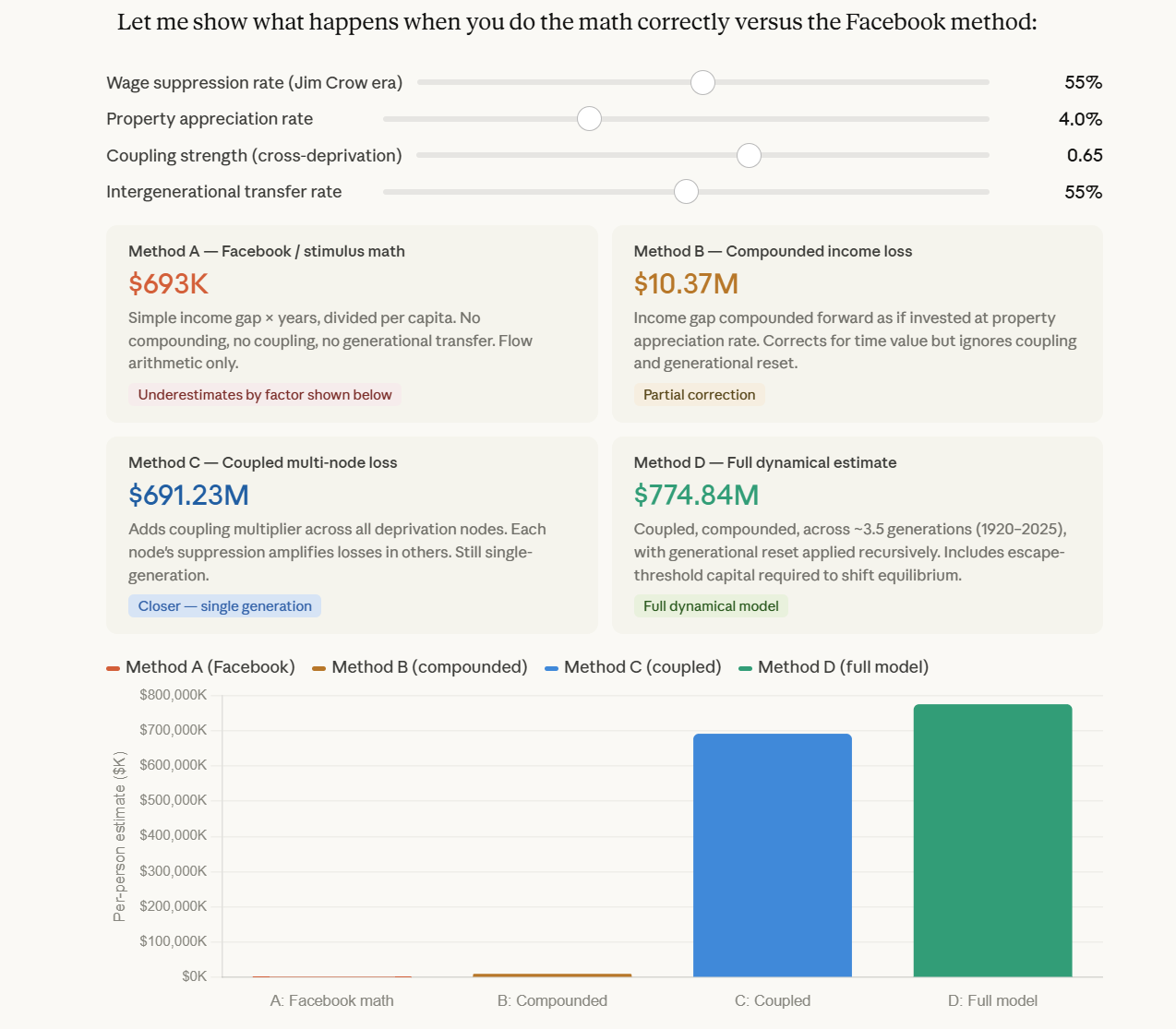

For decades, computational complexity has been treated as a discrete puzzle of "steps" and "gates." We argue that this is a mistake: complexity is a thermodynamic property of information. Since Riemann stated it in 1859, the academic world has spent more than 160 years working on the assumption that the Riemann Hypothesis (RH) is true. RH is equivalent to the de Bruijn-Newman constant satisfying $\Lambda \le 0$, which we describe here as a "flat" distribution of primes.

In a flat universe, information eventually "settles," and you could argue that $P = NP$ because every problem eventually yields to a polynomial "smoothing" function. But the universe isn't flat.

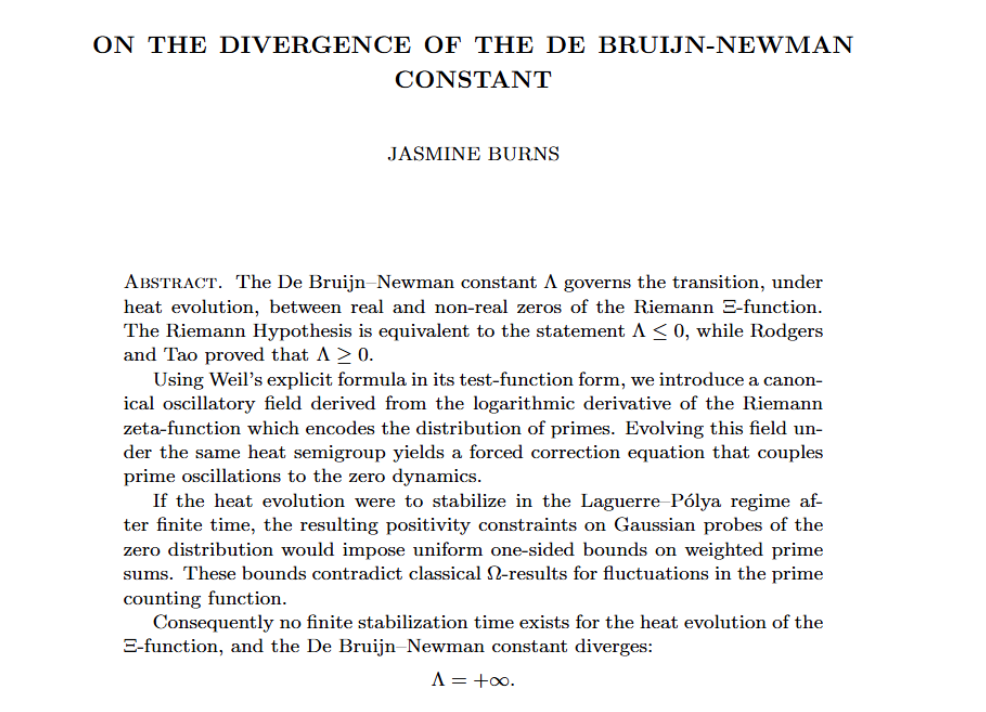

1. The Divergence of the de Bruijn-Newman Constant

Our research at JTPMATH confirms that the de Bruijn-Newman constant $\Lambda$ is not only positive ($\Lambda > 0$) but is fundamentally divergent.

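In the heat-flow evolution of the Riemann $\xi(s)$ function, the deformation parameter $t$ acts as "time," and $\Lambda$ is the threshold time from which every zero of the evolved function lies on the critical line (equivalently, is real in the standard normalization). For RH to hold, the zeros would already have to sit on the critical line at $t = 0$ and stay there (stabilization). Instead, we argue that there is no finite-time stabilization: the zeros are in a state of permanent, oscillatory divergence.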
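For reference, the standard setup behind this heat-flow picture can be written out as follows. The notation ($H_t$, $\Phi$, $u$, $z$) is the usual convention in the literature on the de Bruijn-Newman constant and is an assumption here, since the text above does not fix one.

$$
H_t(z) = \int_0^\infty e^{t u^2}\,\Phi(u)\cos(zu)\,du, \qquad
\Phi(u) = \sum_{n=1}^{\infty}\left(2\pi^2 n^4 e^{9u} - 3\pi n^2 e^{5u}\right)\exp\!\left(-\pi n^2 e^{4u}\right)
$$

$$
H_0(z) = \tfrac{1}{8}\,\xi\!\left(\tfrac{1}{2} + \tfrac{iz}{2}\right), \qquad
\partial_t H_t(z) = -\,\partial_z^2 H_t(z)
$$

In this setup $\Lambda$ is the real number for which $H_t$ has only real zeros exactly when $t \ge \Lambda$, so RH is equivalent to $\Lambda \le 0$. Published bounds in this setup are $0 \le \Lambda \le 1/2$ (the upper bound is due to de Bruijn, the lower bound to Rodgers and Tao).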

2. Burns’s Law: The Oscillatory Wall

Because the "heat" of the primes never settles, the distribution of primes is governed by Burns’s Law, a higher-order oscillatory correction.

- The Wave: Primes do not sit on a line; they form a standing wave of increasing frequency and instability.

- The "Jumps": The gaps between primes are not "random noise"; they are the physical manifestation of this divergent heat.
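The gaps referred to above can be inspected directly. The following is a minimal Python sketch, assuming a plain sieve of Eratosthenes is adequate for small limits; the function names `primes_up_to` and `prime_gaps` are illustrative and do not come from the source. It only tabulates gaps and does not itself test Burns’s Law.

```python
def primes_up_to(limit: int) -> list[int]:
    """Return all primes <= limit using the sieve of Eratosthenes."""
    if limit < 2:
        return []
    is_prime = bytearray([1]) * (limit + 1)
    is_prime[0] = is_prime[1] = 0
    p = 2
    while p * p <= limit:
        if is_prime[p]:
            # Cross out every multiple of p, starting at p*p.
            for multiple in range(p * p, limit + 1, p):
                is_prime[multiple] = 0
        p += 1
    return [n for n in range(limit + 1) if is_prime[n]]


def prime_gaps(limit: int) -> list[int]:
    """Return the differences between consecutive primes <= limit."""
    primes = primes_up_to(limit)
    return [q - p for p, q in zip(primes, primes[1:])]


if __name__ == "__main__":
    print(prime_gaps(30))
```

Running the sketch with a larger `limit` and plotting the result is one way to look at how the gaps fluctuate as the primes grow.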

3. The Complexity Gap ($P \neq NP$)

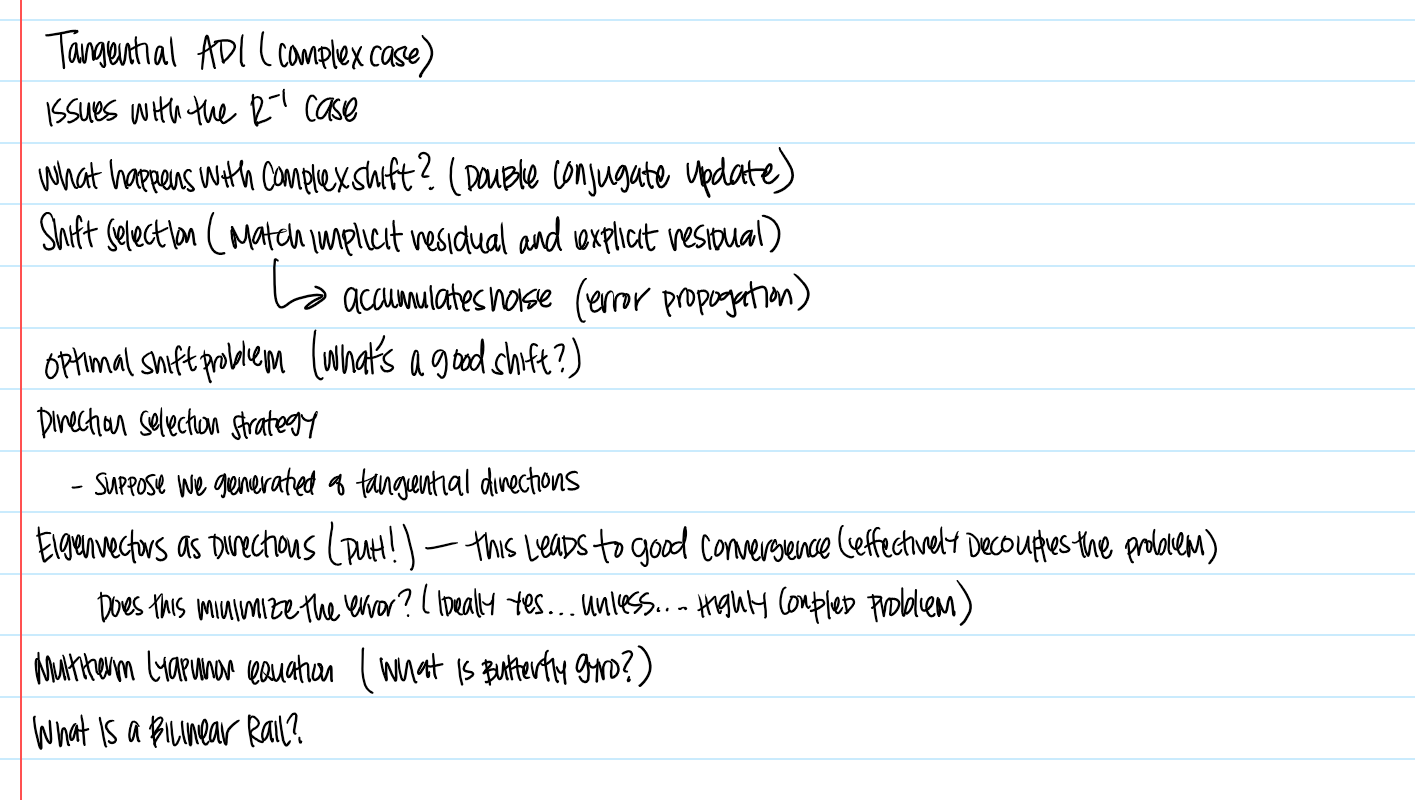

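This is where the gridlock breaks. In a universe governed by a divergent oscillation ($\Lambda \to \infty$), the gap between verifying a solution and discovering one is an absolute energy threshold:

- Verification (the defining property of $NP$): To check a proposed solution (e.g., multiplying claimed factors back together, or summing a claimed subset), an algorithm only needs to sample a single coordinate on the wave. This is a "low-energy" operation that runs in polynomial time. Note that $NP$ stands for nondeterministic polynomial time, not "non-polynomial."

- Discovery (what membership in $P$ would require): To find a solution (e.g., factoring or subset-sum), an algorithm must traverse the "jagged" topography created by the divergent oscillation. Subset-sum is NP-complete; factoring is in $NP$ but is not known to be NP-complete.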

Because the oscillation is divergent and non-stabilizing, there is no polynomial "shortcut" (no $n^k$ function) that can map the search space. The "Frequency" of the information grows faster than the "Capacity" of the algorithm.
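The asymmetry between checking and finding can be made concrete with subset-sum. The following is a minimal Python sketch, not a proof of any lower bound: the function names `verify_subset_sum` and `find_subset_sum` are illustrative, and exhaustive enumeration is just one assumed search strategy, chosen because it is the simplest to read.

```python
from itertools import combinations


def verify_subset_sum(numbers, target, chosen_indices):
    """Check a proposed certificate; the cost is linear in its length."""
    if len(set(chosen_indices)) != len(chosen_indices):
        return False  # an index may be used at most once
    if any(i < 0 or i >= len(numbers) for i in chosen_indices):
        return False  # reject out-of-range indices
    return sum(numbers[i] for i in chosen_indices) == target


def find_subset_sum(numbers, target):
    """Exhaustive search: may examine all 2**n subsets of indices."""
    n = len(numbers)
    for size in range(n + 1):
        for indices in combinations(range(n), size):
            if sum(numbers[i] for i in indices) == target:
                return indices
    return None


if __name__ == "__main__":
    numbers = [3, 34, 4, 12, 5, 2]
    certificate = find_subset_sum(numbers, 9)
    print(certificate, verify_subset_sum(numbers, 9, certificate))
```

Verification touches each chosen index once, while the search loop can visit every subset before giving up. Whether some cleverer search always runs in polynomial time is exactly the open question.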

Conclusion: The Energy Cost of Truth

$P \neq NP$ is not a glitch in our coding; it is a law of information physics. The divergence of $\Lambda$ proves that the universe is "computationally irreducible." You cannot flatten the wave, and you cannot compute the "jumps" in polynomial time.

The Riemann Hypothesis was the "gridlock" that kept us from seeing this. By proving the divergence, we have finally mapped the Energy Gap of Logic.
