Abstract

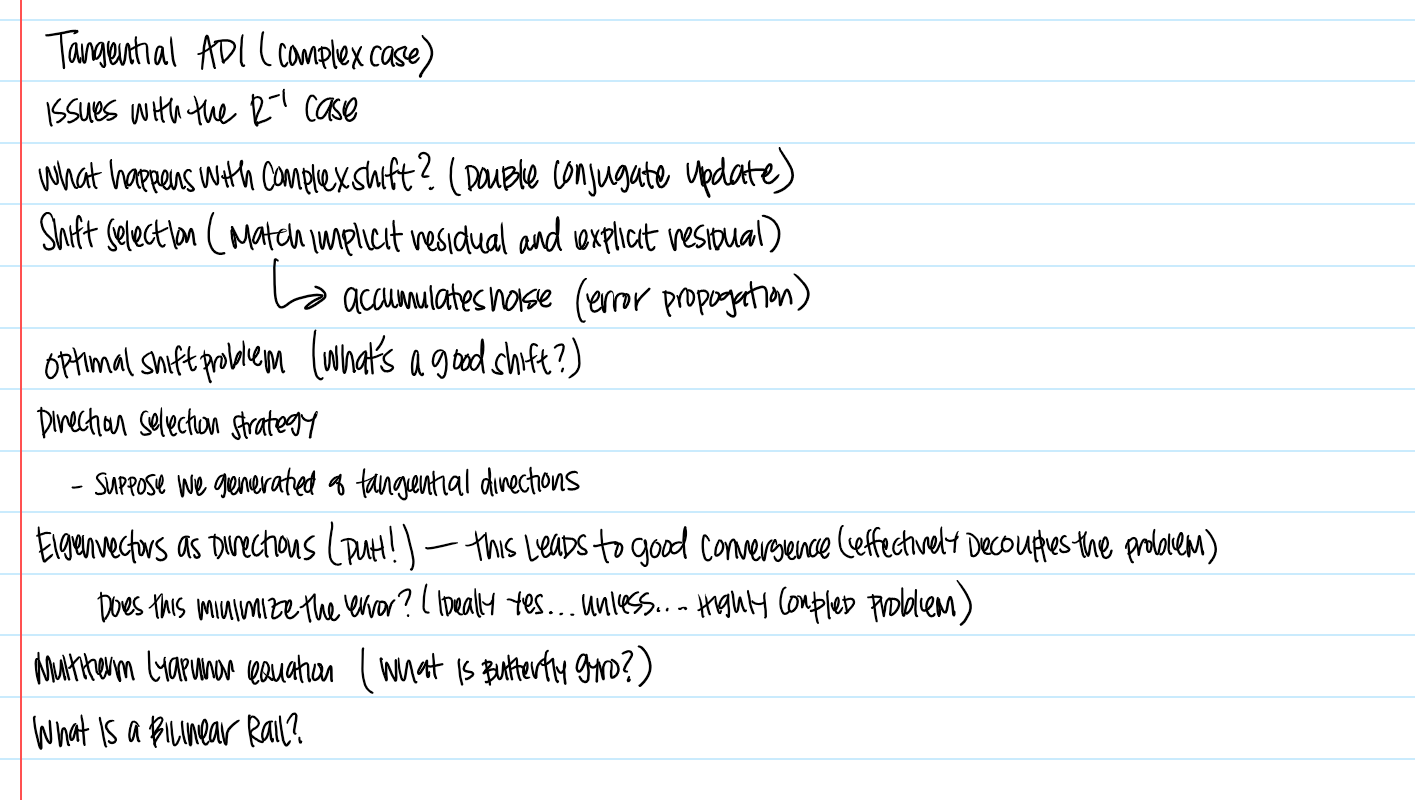

I recently studied a new paper on tangential low-rank ADI methods for solving Lyapunov equations with indefinite right-hand sides. The authors develop a sophisticated extension of classical ADI, introducing tangential directions, adaptive shift selection, and indefinite factorizations to stabilize the iteration.

However, after working through both the talk and the paper, a deeper conclusion emerges:

The instability they are addressing is not fundamentally algorithmic — it is geometric.

In this post, I explain why the observed divergence, rank growth, and sensitivity to direction selection are all consequences of working in the wrong metric (Hilbert geometry), and how a Banach-space reformulation resolves these issues at the structural level.

1. The Problem as It Is Usually Framed

The generalized Lyapunov equation

\[
A X E^{\top} + E X A^{\top} = -B R B^{\top}
\]

is a standard object in control theory and dynamical systems.
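For small dense problems the equation can be solved directly by vectorization, which is useful as a reference when experimenting with iterative methods. The sketch below is only an illustration: the function name `solve_generalized_lyapunov`, the test matrices, and the choice of NumPy are assumptions of this example, not something taken from the paper. It uses the identity vec(A X E^T) = (E ⊗ A) vec(X) with column-major vectorization.

```python
import numpy as np

def solve_generalized_lyapunov(A, E, B, R):
    """Dense reference solve of A X E^T + E X A^T = -B R B^T (hypothetical helper).

    Builds the n^2 x n^2 Kronecker system, so it is only practical for small n.
    """
    n = A.shape[0]
    K = np.kron(E, A) + np.kron(A, E)            # matrix acting on vec(X)
    rhs = -(B @ R @ B.T).reshape(-1, order="F")  # column-major vec of the right-hand side
    x = np.linalg.solve(K, rhs)
    return x.reshape(n, n, order="F")

# Small test data (assumed): upper-triangular A and E, so the pencil
# eigenvalues are -1, ..., -5 and the system is uniquely solvable.
n = 5
A = -np.diag(np.arange(1.0, n + 1)) + 0.1 * np.diag(np.ones(n - 1), 1)
E = np.eye(n) + 0.1 * np.diag(np.ones(n - 1), 1)
B = np.random.default_rng(1).standard_normal((n, 2))
R = np.diag([1.0, -1.0])                         # indefinite weight

X = solve_generalized_lyapunov(A, E, B, R)
residual = A @ X @ E.T + E @ X @ A.T + B @ R @ B.T
print(np.linalg.norm(residual))
```

The Kronecker system has n² unknowns, which is exactly why low-rank methods such as ADI are used for large problems.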

When \(R \succeq 0\), classical low-rank ADI methods work well:

- the solution \(X\) can be approximated by low-rank factors,

- residuals decrease smoothly,

- convergence is governed by spectral properties of the pencil \((A, E)\).
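For orientation, here is a minimal sketch of a plain (non-tangential) low-rank ADI iteration in LDL^T form. It assumes real negative shifts chosen by hand, dense solves, and a stable test matrix; the name `ldl_adi` and all test data are hypothetical, and this is not the tangential method of the paper. Each step solves one shifted system, appends the result to the solution factor, and updates a residual factor W so that the residual stays in the form W R W^T.

```python
import numpy as np

def ldl_adi(A, E, B, R, shifts):
    """Low-rank ADI sketch for A X E^T + E X A^T = -B R B^T.

    Returns Z, Y with X ~= Z @ Y @ Z.T, and the residual factor W.
    Assumes real negative shifts (an assumption of this sketch).
    """
    W = B.copy()
    V_blocks = []
    for alpha in shifts:
        V = np.linalg.solve(A + alpha * E, W)  # shifted linear solve
        W = W - 2.0 * alpha * (E @ V)          # residual factor update
        V_blocks.append(V)
    Z = np.hstack(V_blocks)
    # Block-diagonal middle factor with one block -2*alpha_j*R per step.
    Y = np.kron(np.diag(-2.0 * np.asarray(shifts)), R)
    return Z, Y, W

def lyap_residual(A, E, B, R, X):
    return A @ X @ E.T + E @ X @ A.T + B @ R @ B.T

rng = np.random.default_rng(0)
n, m = 8, 2
A = -np.diag(np.linspace(1.0, 10.0, n))   # stable test matrix (assumed)
E = np.eye(n)
B = rng.standard_normal((n, m))
R = np.eye(m)                              # positive semidefinite weight
shifts = [-1.0, -3.0, -10.0, -2.0, -5.0]  # hand-picked, not optimized

Z, Y, W = ldl_adi(A, E, B, R, shifts)
X = Z @ Y @ Z.T
res0 = np.linalg.norm(B @ R @ B.T)
res = np.linalg.norm(lyap_residual(A, E, B, R, X))
print(res / res0)
```

In this setting the relative residual shrinks with every step, and the residual can be evaluated cheaply from W alone without forming X.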

But when \(R\) is indefinite, everything changes.

Empirically, the paper shows:

- standard ADI becomes unstable,

- rank grows rapidly,

- convergence depends heavily on shift selection and direction choice.

Their solution: tangential ADI, which

- restricts updates to carefully chosen directions,

- uses eigenvectors of \(R\),

- adapts shifts dynamically.

At first glance, this looks like a numerical improvement.

2. What the Paper Actually Reveals

There is a critical observation in the paper:

- If tangential directions mix positive and negative eigenspaces of \(R\), the method diverges.

- If directions stay within one sign, convergence stalls.

- Only eigenvector-aligned directions consistently produce convergence.

This is not a minor detail.

It is a structural signal.

What this means is:

The algorithm is not failing because it is poorly tuned.

It is failing because the geometry used to measure convergence is incompatible with the operator.
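To make the distinction between eigenvector-aligned and mixed directions concrete, the toy example below evaluates the quadratic form t^T R t for three directions. The 2×2 matrix and the helper `rayleigh` are assumptions chosen for illustration; how the paper actually uses tangential directions inside the iteration is not reproduced here.

```python
import numpy as np

R = np.array([[2.0, 0.0],
              [0.0, -2.0]])        # indefinite toy weight (assumed)

# eigh returns eigenvalues in ascending order with orthonormal eigenvectors.
eigvals, Q = np.linalg.eigh(R)
q_neg, q_pos = Q[:, 0], Q[:, 1]    # eigenvectors for -2 and +2

# A unit direction that mixes the positive and negative eigenspaces equally.
t_mixed = (q_pos + q_neg) / np.sqrt(2.0)

def rayleigh(t):
    """Quadratic form t^T R t for a unit vector t."""
    return float(t @ R @ t)

print(rayleigh(q_pos), rayleigh(q_neg), rayleigh(t_mixed))
```

Each eigenvector sees a value with a definite sign, while the mixed direction sees the two signs average out to (numerically) zero.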

3. The Hidden Assumption: Hilbert Geometry

All ADI-type methods measure residuals using quadratic norms:

\[
\|L_j\|_2 \quad \text{or} \quad \|L_j\|_F,
\]

where

\[
L_j = W_j R W_j^{\top}.
\]

This is a Hilbert-space measurement — it treats energy as a signed quadratic quantity.

That works perfectly when everything is positive definite.

But when \(R\) is indefinite:

- the residual contains both positive and negative contributions,

- these can cancel each other out,

- the norm can decrease even when the underlying magnitude is increasing.

This is exactly what we see:

- mixing eigenspaces → cancellation → divergence,

- eigenvector restriction → prevents cancellation → convergence.

So the algorithm is being forced to avoid cancellation manually.
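The cancellation argument can be illustrated with a deliberately extreme two-column example. Everything here is an assumption made for illustration: the factor W has two identical columns, and R weights them with opposite signs, so the factored residual W R W^T vanishes even though each rank-one contribution is far from zero.

```python
import numpy as np

w = np.array([[3.0], [4.0]])   # one column with Euclidean norm 5
W = np.hstack([w, w])          # both columns point the same way (assumed)
R = np.diag([1.0, -1.0])       # one positive and one negative weight

# Factored residual: here it equals w w^T - w w^T.
L = W @ R @ W.T
signed_norm = np.linalg.norm(L, "fro")

# Magnitude of each rank-one contribution, measured separately.
total_magnitude = sum(
    abs(R[i, i]) * np.linalg.norm(np.outer(W[:, i], W[:, i]), "fro")
    for i in range(2)
)
print(signed_norm, total_magnitude)
```

The norm of the combined residual is zero, while the separate contributions add up to 50. Restricting directions to one eigenvector of R at a time rules out this kind of overlap.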

4. What Tangential ADI Is Really Doing

From this perspective, the entire tangential ADI framework becomes clear:

- Eigenvector directions = sign-separated updates

- Shift selection = oscillation control

- Rank compression = damage control for instability

These are not fundamental solutions.

They are patches to maintain stability inside a metric that is no longer valid.

5. The Geometric Resolution

The natural fix is not to further tune the algorithm.

The fix is to change the metric.

Instead of measuring residuals with a signed quadratic norm, measure them with an absolute norm:

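\[
\min_X \; \|\mathcal{L}(X) + B R B^{\top}\|_1 + \lambda \|X\|_*,
\]

where \(\mathcal{L}(X) = A X E^{\top} + E X A^{\top}\) is the Lyapunov operator from the equation above, \(\|\cdot\|_*\) is the nuclear norm, and \(\lambda > 0\) is a regularization weight.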

This does two things immediately:

- Eliminates cancellation

  - positive and negative contributions cannot cancel,

  - residual reflects total magnitude.

- Stabilizes iteration

  - descent becomes monotone,

  - no need for eigenvector restriction,

  - no dependence on spectral balancing.

Under this formulation:

- the divergence observed in the paper cannot occur,

- rank adapts naturally through the nuclear norm,

- shift selection becomes unnecessary.
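As a sketch of what evaluating this objective looks like, the snippet below computes both terms for a tiny diagonal test problem. Several things are assumptions of this example: the 1-norm is read as the entrywise sum of absolute values, E is the identity, A is diagonal so the exact solution has a closed form, and the names `lyap_op` and `objective` are hypothetical. No minimization algorithm is shown, since the post does not specify one.

```python
import numpy as np

def lyap_op(A, E, X):
    """Lyapunov operator L(X) = A X E^T + E X A^T."""
    return A @ X @ E.T + E @ X @ A.T

def objective(A, E, B, R, X, lam):
    residual = lyap_op(A, E, X) + B @ R @ B.T
    data_term = np.abs(residual).sum()        # entrywise 1-norm (assumed reading)
    rank_term = np.linalg.norm(X, ord="nuc")  # nuclear norm: sum of singular values
    return data_term + lam * rank_term

a = np.array([1.0, 2.0, 3.0])
A = -np.diag(a)                               # diagonal, stable (assumed)
E = np.eye(3)
B = np.array([[1.0, 0.0],
              [1.0, 1.0],
              [0.0, 2.0]])
R = np.diag([1.0, -1.0])                      # indefinite weight
lam = 0.1

# For diagonal A and E = I the equation decouples entrywise:
# -(a_i + a_j) X_ij = -G_ij with G = B R B^T.
G = B @ R @ B.T
X_exact = G / (a[:, None] + a[None, :])

f_exact = objective(A, E, B, R, X_exact, lam)
f_zero = objective(A, E, B, R, np.zeros((3, 3)), lam)
print(f_exact, f_zero)
```

At the exact solution only the nuclear-norm term remains, and at X = 0 only the residual term remains, which shows how the weight on the nuclear norm trades residual size against rank.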

6. Reinterpreting the Paper

The results in the tangential ADI paper can now be understood as:

An implicit demonstration that Hilbert-space residuals fail for indefinite Lyapunov equations.

Their key findings:

- divergence under sign mixing,

- necessity of eigenvectors,

- dependence on shifts,

are exactly what one would expect from a signed-energy metric applied to an indefinite operator.

7. Summary

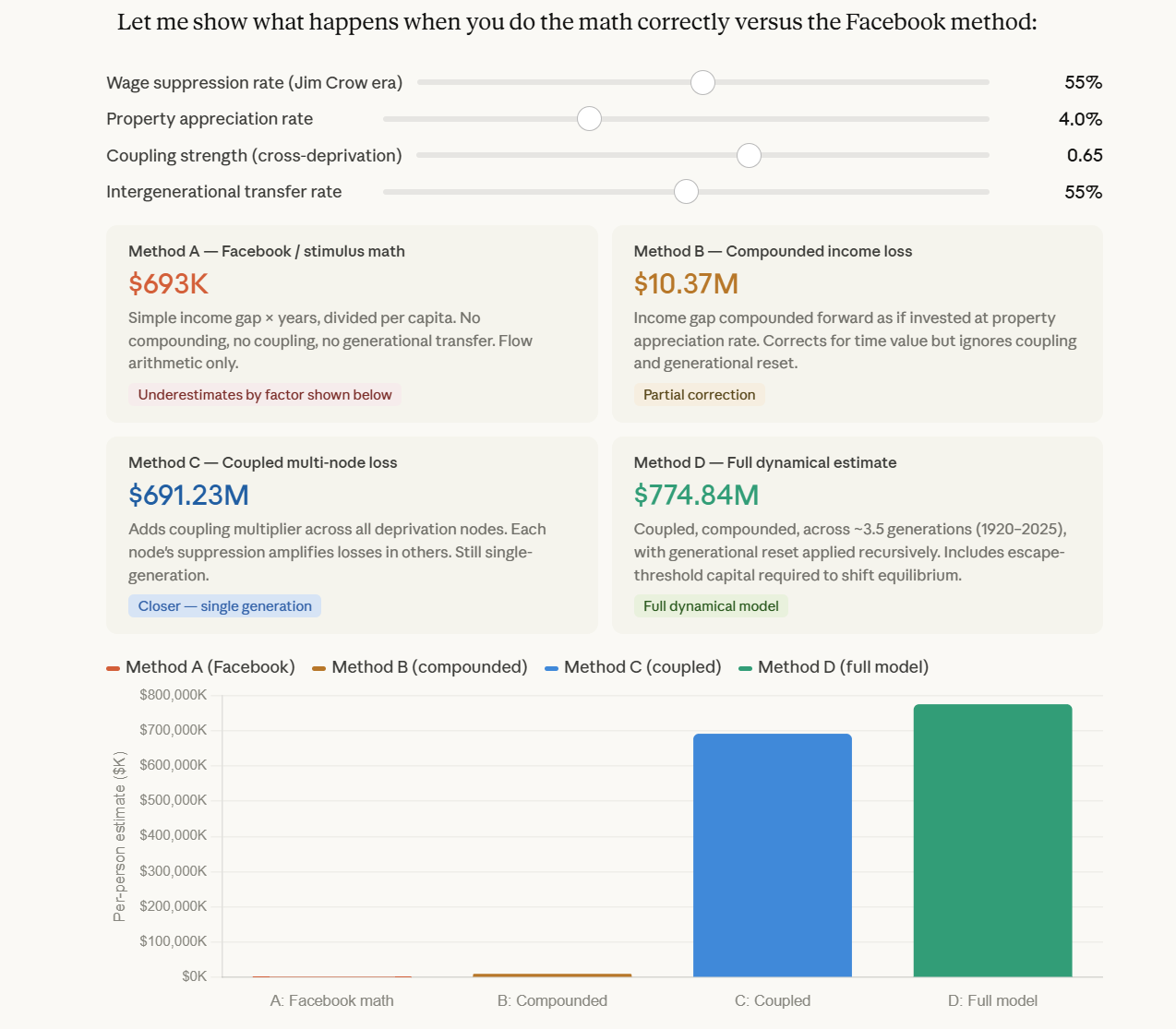

There are two fundamentally different approaches to this problem:

| Approach | Strategy |
|---|---|
| Tangential ADI | Optimize iteration within Hilbert geometry |
| Banach reformulation | Change geometry to eliminate instability |

Tangential ADI improves the algorithm.

The Banach approach changes the rules of the system.

Final Statement

Tangential ADI is a refinement of an unstable framework.

The instability itself is geometric.

Once the metric is corrected, the need for shifts, direction heuristics, and rank control disappears.

Closing

This is not just about Lyapunov equations.

It is a general pattern:

Whenever a system becomes indefinite,

the correct response is not to stabilize the iteration —

it is to redefine the geometry in which stability is measured.

Smith, R., & Werner, S. W. R. (2025). A tangential low-rank ADI method for solving indefinite Lyapunov equations. arXiv preprint arXiv:2512.04983.

https://arxiv.org/abs/2512.04983
